Archive

Cube Wackiness – Two Different Internal Errors

A couple of weeks ago, I posted a blog entry that demonstrated my ignorance on how cube space was defined in SSAS. Starting my BI career with Essbase, I still think in the block storage mode used by that platform. One of the strengths that Essbase had was that attributes could be created on stored members without requiring the cube to be reprocessed. I thought that an SSAS attribute which had its AttributeHierarchyEnabled property set to False would behave identically. That thinking is incorrect and even disabled attributes help define the cube space.

That feature worked great for me at the time as what once was a disabled attribute, through a significant shift in reporting requirements, now needed to be enabled. Re-enabling the attribute and deploying the changes, surprisingly to me, did not require that the dependent cubes be reprocessed. However, I had made a serious PEBKAC error. I changed the AttributeHierarchyEnabled property on the database dimension and also in the cube dimension of only one of my cubes. I had a linked measure group to another cube where the attribute hierarchy wasn’t re-enabled. SSAS let me go on so I thought everything was okay.

This week a very simple query on the linked measure group was run using this newly re-enabled hierarchy that was still disabled on the source cube.

SELECT {

[Measures].[Measure from Linked Measure Group]

} ON COLUMNS,

{

[Dimension].[Re-enabled Attribute].[Re-enabled Attribute].Members

} ON ROWS

FROM [Cube with Linked Measure Group]

WHERE (

[Date].[By Calendar].[2009]

)

which returned the following:

Internal error: An unexpected error occurred (file ‘mdsubcube.cpp’, line 6260, function ‘MDSubcube::IsPartiallyCoveringCell’).

What’s also strange is when I changed the year from 2009 to 2010, a different error was returned.

Internal error: An unexpected error occurred (file ‘pfstore.cpp’, line 822, function ‘PFStore::CopyRecords’).

Apparently, when using linked measure groups, SSAS doesn’t like the situation when an attribute is enabled in one cube but not the other. When I enabled the attribute hierarchy in both cube dimensions, the errors were resolved.

Do I really need a data warehouse?

I recently received a link to a BI Requirements for IT white paper from Tableau Software and SQL Server magazine. Though most of the points I strongly agree with, the paper seems to suggest that building a data warehouse is simply unnecessary. Other than the fact that operational systems that run the business and the decision support systems from which the business is analyzed should not share the same physical resources, there is a fundamental mathematical reason data is stored redundantly in a well-architected IT data infrastructure.

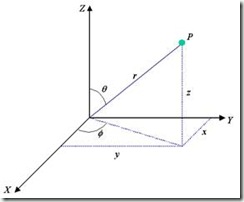

Sorry to sound like a pure academic here, but it comes with being in school way too long. And I do mean, way, way, way too long. Like my academic training in chemical physics, data warehousing is also essentially applied elementary linear algebra. So as a simple example, consider the description of a point in space as shown in the figure. The most familiar representation is in a Cartesian or xyz coordinate system. In this case, a point is defined by specifying the distance along the x axis, along with the distance along the y-axis, and the height along the z-axis. In a real world implementation, the x and y coordinates would represent the distance along two walls of a room and the z-axis the distance from the floor.

However, a Cartesian coordinate system is not the only possible representation. Spherical coordinates could also be used. In this case, the point is defined by specifying the distance of a radial vector from the origin, r, and two angles, Θ and Φ. In both Cartesian and spherical coordinates, all three axes are orthogonal or mutually independent. Additionally, a linear transformation can be applied to convert one representation to the other.

Is one representation better than another? The simple answer is it depends. If the physical problem being addressed is solving an equation of motion subjected to a linear force, Cartesian coordinates would be more appropriate. Likewise, if the problem being addressed is the description of a Kepler orbit, spherical coordinates are more appropriate.

So what does this have to do with business systems? In an oversimplified description, there are two principle data models used in business applications. A normalized model is applied when there should be no redundancies in storage and is most appropriate for capturing business transactions. It’s generally a poor model when applied to addressing analysis of a business due to the complexity of the model and the amount of physical resources needed to address joining resources from disparate data sources. Therefore, rather than use the same data model for analyzing a business, a dimensional model as described by Ralph Kimball is more appropriate. And, as a linear transformation can be applied to convert from a Cartesian to a spherical coordinate system, a linear transformation (SQL Server Integration Services if using the Microsoft BI tool stack) can be applied to transform between the normal model and a dimensional model.

I know this is long and boring. However, to answer the question posed in the title, drawing an analogy with a simple physical problem, a data warehouse is absolutely essential.

AttributeHierarchyEnabled and Cube Space Definition

Sometimes I fall into the trap of assuming one product behaves like another. For instance, with Essbase, you could add an attribute hierarchy (really member properties) without requiring reprocessing of the cube. I thought the same with Analysis Services when an attribute was added to a cube dimension and the AttributeHierarchyEnabled property was set to false. BOL also seems to lead me to that conclusion. I would have thought that an attribute with the AttrbiuteHierarchyEnabled property set to false, since an attribute hierarchy isn’t created, would not have any impact on the cube space definition. However, if one adds a new disabled attribute hierarchy to a cube, it does require reprocessing of all measure groups related to that dimension.

To my delight this past week, I had to change the AttributeHierarchyEnabled property from False to True. I thought I would have to reprocess the entire cube. However, I was wrong. All I did was change the property value, deploy the changes, and a new attribute hierarchy was visible. Apparently, even attribute hierarchies with this property set to false still help to define the cube space.

Five Things SSAS Should Drop

The trend started with a thread entitled Five Things SQL Server Should Drop a few months back. Naturally, that post triggered a similar response in SSIS by Jamie Thomson and Todd McDermid and in SSRS by David Leibowitz. Nearly three months later and there still doesn’t exist a similar meme for SQL Server Analysis Services (SSAS), at least not one that comes back in Google or Bing. So here to complete the set are five things I believe should be dropped from SSAS.

LookupCube and NonEmptyCrossJoin MDX functions

I realize that these MDX functions exists for backward support. The NonEmptyCrossJoin function was a kludge when it was first introduced. All of its functionality has been incorporated into the NonEmpty function in the latest versions of SQL Server. Its very existence needs to be eradicated from everyone’s memory. The LookupCube function is just plain scary. Though I guess it may have some problems that are resolvable with its use, if you have to use it, it’s probably signifying a much larger design problem that would be better addressed through the use of linked measure groups. The use of the LookupCube function in the calculations tab of a cube is one way to degrade the performance of an otherwise well-designed cube.

Cell Level Security

Another way to absolutely destroy the performance of an otherwise well-designed cube is through the use of cell level security. Why? Use of cell-level security disables the global cache which usually handles the most frequently requested queries. But, how else do you handle security requirements that need to be addressed by OR conditions? (i.e. Users need to be able to see Company X or all accounts that begin with 9.) Rather than use cell-level security, the use of a many-to-many relationship is a much better option. Though M2M relationships have their own issues, the use of a M2M relationship in this situation will involve a relatively small intermediate measure group that will usually be highly performing, at least much more highly performing than the use of cell-level security. And also, since the requirements are handled by a relational table, much more maintainable also.

Unknown Member

Unknown members were created to handle situations where NULLS exist for a dimension reference in a related fact table. Since I don’t believe a data warehouse should allow NULLs, I don’t like this property at all. Instead, if you have a missing dimension reference in a fact table, replace that instance with a pointer to a ‘Not Available’ member in your ETL. Doing so follows best practices also. I know I’ll likely not ever use this property. Even if I inherit a poorly designed warehouse that does have NULLs, I would correct these references in the DSV or server based views if left with no better option.

Server Time Dimension

Again, I don’t like that this feature is even available. It just leads to bad practices and misunderstandings. A well-designed data warehouse should have a well-formed date dimension that can easily and gracefully address ever changing analytical requirements. The use of a server-based date dimension will not.

Named Queries in the Data Source View

Named queries in the DSV aren’t necessarily evil. However, heavy use of named queries in the DSV could transform a well-formulated solution into an unmaintainable mess. The logic addressed by named queries is better addressed by incorporating into database engine views, or better yet, in the ETL and storage engine if that logic is complex.

I’m sure that this post, if anyone actually reads it, will be disagreeable to some.

Later, Martin